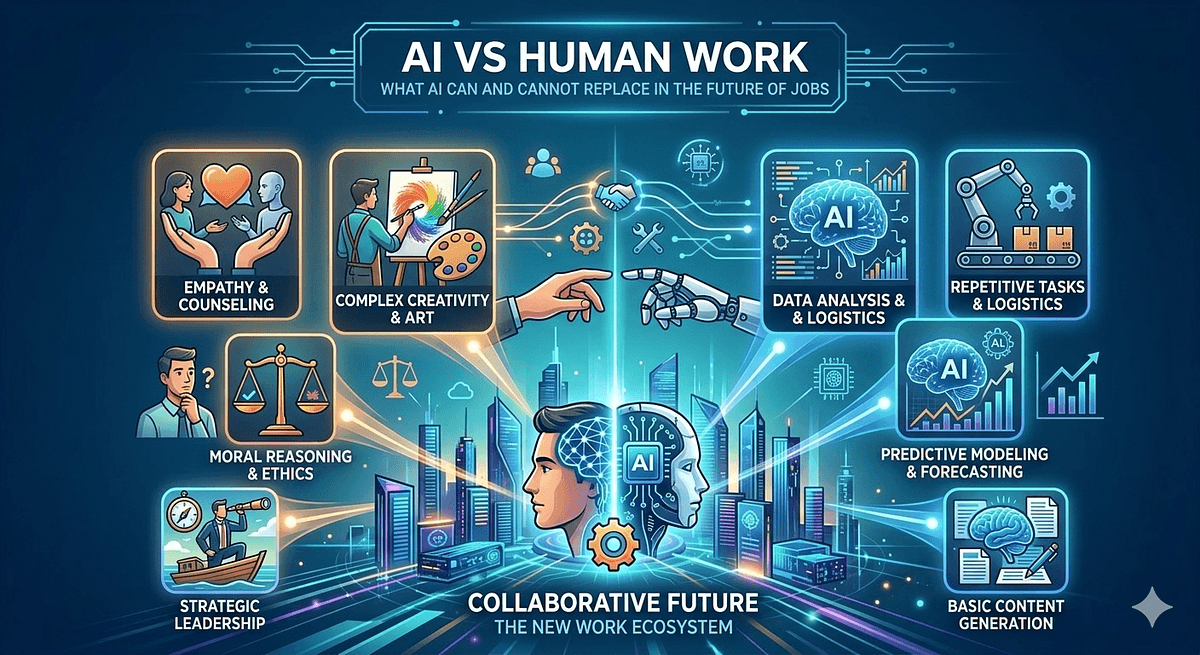

The question is no longer whether AI will change the nature of work. It already has. The more important question, the one that actually affects your career decisions, your hiring strategy, and your business planning, is which specific types of work AI can and cannot replace, and on what timeline. The AI vs human work debate is often framed as a binary: AI wins or humans win. The reality is more nuanced and considerably more useful to understand. This article maps the current state of AI capability honestly, identifies where human work remains genuinely irreplaceable, and outlines what the future of AI jobs actually looks like for people navigating this shift right now.

To understand the impact of AI on jobs, it helps to distinguish between task automation and job automation. Very few entire jobs have been eliminated by AI. A much larger number of tasks within jobs have been automated, compressed, or made redundant. The pattern is consistent across industries: AI is most effective at work that is high-volume, rule-based, and does not require real-time contextual judgment or physical presence.

Document processing is one of the clearest examples. AI now reads, classifies, extracts data from, and routes documents at a scale and speed that human teams cannot approach. Legal discovery, insurance claims processing, financial statement analysis, and medical record triage are all areas where AI has substantially reduced the human hours required to handle large document volumes. McKinsey's 2025 AI report estimated that document processing is among the top three most automated work categories globally.

Customer service at the tier-one level is another clear automation zone. In some deployments, tools like Intercom Fin, Salesforce Agentforce, and Zendesk AI resolve over half of incoming support tickets without any human involvement. Password resets, order status queries, FAQ-level information, and standard refund processing are handled autonomously. The net effect is not the elimination of customer service departments but a significant reduction in the headcount required to manage equivalent ticket volumes.

Code generation is arguably the domain where AI's effect on day-to-day work has been most dramatic and most rapid. Over 56 percent of engineers now complete 70 percent or more of their coding work with AI assistance, according to the Pragmatic Engineer's 2026 survey. Boilerplate generation, test scaffolding, documentation, and straightforward feature implementation are now largely AI-assisted tasks. The implication is not that developers are disappearing, but that the floor for what a developer is expected to produce individually has risen significantly.

Data reporting, translation of standard commercial content, basic graphic design for templated formats, transcription, and scheduling and calendar management all follow the same pattern. The common thread is that these tasks involve applying defined rules to large input volumes, and AI does that better, faster, and more consistently than humans in most measurable respects.

The narrative that AI is an unstoppable force replacing all human work misrepresents where AI reasoning actually breaks down. AI systems are not general reasoners with human-like understanding. They are sophisticated pattern matchers that have been trained on enormous amounts of text and data. That distinction matters practically because it defines a clear set of conditions under which AI performance degrades significantly or fails entirely.

Novel problems that lack clear precedent are one such condition. When a situation requires reasoning from first principles about something genuinely new, rather than applying patterns from historical examples, AI systems produce responses that sound plausible but are structurally unreliable. This is the hallucination problem in its deepest form: the model generates confident-sounding text because that is what it was trained to do, not because its conclusion is grounded in verified reasoning.

Causal reasoning is a second, related limitation. AI systems are very good at identifying correlations in data and very poor at reliably distinguishing causation from correlation in ambiguous real-world situations. A data analyst using AI to identify patterns in customer behavior still needs human judgment to determine whether a correlation reflects a genuine causal relationship or a confounding variable. The AI finds the pattern; the human determines whether the pattern means what it appears to mean.
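The confounding problem can be made concrete with a small simulation. The sketch below uses invented data and hypothetical variable names (`tenure`, `support_tickets`, `renewals`), none of which come from a real dataset. Two customer metrics are both driven by a third variable and have no direct effect on each other, yet they correlate strongly until the third variable is controlled for.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def residuals(ys, zs):
    """Remove the linear effect of zs from ys with a one-variable least-squares fit."""
    n = len(ys)
    mz, my = sum(zs) / n, sum(ys) / n
    slope = (sum((z - mz) * (y - my) for z, y in zip(zs, ys))
             / sum((z - mz) ** 2 for z in zs))
    return [(y - my) - slope * (z - mz) for y, z in zip(ys, zs)]


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 5000

# Hypothetical confounder: how long each customer has held an account.
tenure = [rng.gauss(0, 1) for _ in range(n)]

# Both metrics depend on tenure plus noise; neither influences the other.
support_tickets = [t + rng.gauss(0, 0.5) for t in tenure]
renewals = [t + rng.gauss(0, 0.5) for t in tenure]

# The raw correlation looks like a strong relationship.
raw = pearson(support_tickets, renewals)

# Correlation after removing tenure's effect from both metrics.
controlled = pearson(residuals(support_tickets, tenure),
                     residuals(renewals, tenure))

print(f"raw correlation:        {raw:.2f}")
print(f"controlling for tenure: {controlled:.2f}")
```

With this setup the raw correlation should come out strongly positive and the controlled one close to zero. The simulation can only control for `tenure` because the confounder is known in advance. Deciding which variables might confound a real pattern is the judgment call the paragraph above assigns to the human analyst.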

Accountability and legal responsibility are structural limitations, not technical ones. When an AI system makes a decision that harms someone, there is currently no AI entity that can be held legally responsible. The humans and organizations who deploy AI systems retain accountability for the consequences of those systems. This is why fully autonomous AI decision-making remains legally and ethically constrained in domains including healthcare, finance, law, and public infrastructure, regardless of how technically capable the underlying models become.

The question of AI vs human creativity is one of the most contested areas of the jobs debate, and it is important to be precise about what is actually being compared. AI can generate content that is technically competent, stylistically varied, and often indistinguishable from human output at the surface level. What AI cannot do is generate creative work from a genuine lived perspective, from a personal stake in the outcome, or from original experience that is not present in its training data.

This distinction is commercially significant. AI can produce a technically competent marketing campaign. It cannot produce a campaign rooted in a brand's genuine cultural position, a specific founder's authentic voice, or a creative insight that emerges from deep first-hand knowledge of a community. The creative work that is most defensible from AI automation is precisely the work that is most grounded in specific human experience, perspective, and accountability.

Research published in Harvard Business Review found that AI-generated creative content consistently scores higher on technical execution metrics but lower on originality and emotional resonance with target audiences compared to equivalent human-generated work. The practical implication is that AI is a powerful tool for the execution layer of creative work but a weaker substitute for the strategic and conceptual layers where genuine creative judgment lives.

Looking across the evidence from both AI capability benchmarks and labor market data, several categories of human work emerge as structurally durable against AI automation in the foreseeable future. These are not temporary safe harbors that will close as AI improves. They are categories where the human element is constitutive of the value, not merely instrumental to it.

Trusted advisory relationships fall into this category. A patient being told a serious diagnosis needs a human present who can respond to their emotional reality in real time and bear genuine responsibility for the guidance they provide. A client in a high-stakes legal negotiation needs a lawyer whose professional reputation and legal license are on the line. A board member needs an advisor whose personal credibility and network are inseparable from the advice. These roles are not automated by making the advice technically correct; the relationship and accountability structure is integral to the service itself.

Skilled physical work in complex environments is another structurally durable category. Plumbers, electricians, surgeons performing complex manual procedures, and skilled tradespeople working in physically variable environments all perform work that requires fine motor dexterity, real-time physical problem-solving, and embodied situational judgment. Physical AI robotics is advancing, but deploying reliable general-purpose physical robots in unstructured human environments remains a significant unsolved engineering challenge.

Ethical and governance leadership is a third durable category. Decisions about what an organization values, how it treats its people, what risks it will accept, and how it responds to moral complexity are fundamentally human responsibilities. An AI system can model the consequences of different decisions. It cannot bear moral responsibility for them, and organizations increasingly require leaders who can articulate and defend value-laden choices to regulators, employees, and the public.

The WEF Future of Jobs Report 2025 projects 92 million job displacements alongside 170 million new job creations by 2030, a net gain of 78 million. The net addition is real, but the transition is not automatic. The jobs being created are concentrated in AI development and oversight, data infrastructure, green energy, and care sectors. The jobs being displaced are concentrated in administrative processing, routine customer interaction, and entry-level analytical roles. These populations often do not overlap in skills or geography.

The empirical picture at the firm level is nuanced. MIT economist David Autor's research found that occupations most exposed to AI automation have seen employment growth, not decline, in aggregate so far, because AI is augmenting worker productivity enough to expand demand for the service rather than simply replacing the workers who provide it. However, this pattern is concentrated in higher-skill occupations. Routine-task workers in lower-wage roles are showing wage compression and reduced employment in several studied sectors.

The most defensible career strategy that emerges from this data is not to identify a job category AI cannot reach but to develop a skill profile centered on directing, evaluating, and collaborating with AI systems alongside the relationship, judgment, and accountability capabilities that remain structurally human. The highest-value professional in most fields in 2026 is not the one who avoids AI but the one who uses it most effectively while contributing the human dimensions that AI cannot replicate.

The AI vs human work debate is most useful when it moves from abstraction to specifics. AI is automating the execution layer of knowledge work rapidly and measurably. Human work is most defensible where accountability, embodied judgment, relational trust, and genuine creative perspective are constitutive of the value being delivered, not incidental to it. The future of AI jobs is not a story of replacement but of transformation: the same roles exist but with different task distributions, higher expectations for output, and AI fluency as a baseline professional requirement.

The most productive response to this landscape is the same for individuals and organizations: audit the task composition of your current work or workforce, identify what proportion is in the automated zone versus the durable human zone, and invest deliberately in the capabilities that sit firmly on the human side of that line. The AI limitations that matter most today are not permanent features. The human capabilities that matter most are not accidents. Understanding both clearly is the most valuable professional intelligence available in 2026.
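The audit described above can start as a very small script. The sketch below is one possible shape rather than a standard method. The task names, weekly hours, and zone labels are all invented for illustration, and assigning each task to a zone remains a manual judgment.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    zone: str  # "automated" or "durable", assigned by hand


def zone_shares(tasks):
    """Return each zone's share of total weekly hours as a fraction of 1."""
    hours_by_zone = {}
    for task in tasks:
        hours_by_zone[task.zone] = (
            hours_by_zone.get(task.zone, 0.0) + task.hours_per_week
        )
    total = sum(hours_by_zone.values())
    if total == 0:
        return {}
    return {zone: hours / total for zone, hours in hours_by_zone.items()}


# Hypothetical 40-hour week for a single role.
tasks = [
    Task("Weekly data reporting", 8, "automated"),
    Task("Document triage", 6, "automated"),
    Task("Client advisory calls", 10, "durable"),
    Task("Risk and ethics review", 6, "durable"),
    Task("Reviewing AI-generated drafts", 10, "durable"),
]

shares = zone_shares(tasks)
for zone, share in sorted(shares.items()):
    print(f"{zone}: {share:.0%}")
```

For this invented week, 14 of 40 hours (35 percent) fall in the automated zone and 26 hours (65 percent) in the durable zone. Re-running the same audit every few months shows whether the balance is moving in the intended direction.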