When ByteDance launched Seedance 2.0 in February 2026, it did not simply release another AI video tool. The launch drew cease-and-desist letters from Disney and Paramount, produced a realistic AI-generated rooftop fight scene featuring the likenesses of Brad Pitt and Tom Cruise that went viral, and prompted serious debate about the future of professional video production. For content creators, marketers, and media professionals, Seedance 2.0 represents a step change in what a single person with a modest budget can produce. Understanding what it does, how it works, and where its limitations lie is now a professional necessity rather than a curiosity.

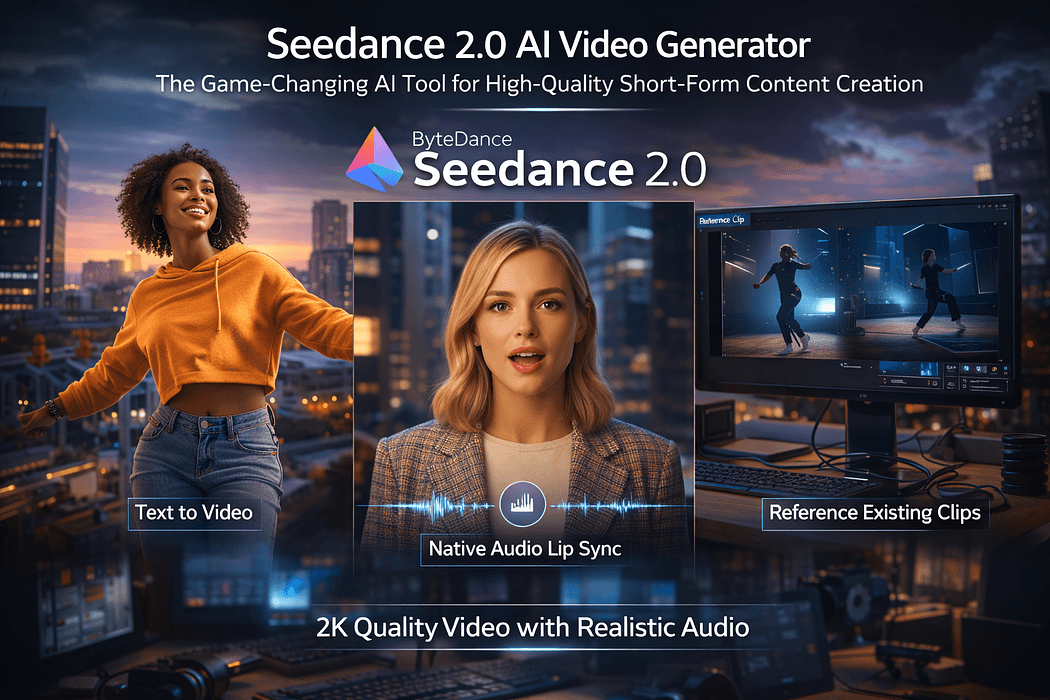

Seedance 2.0 is ByteDance's most advanced AI video generation model, built on a unified multimodal architecture that generates audio and video jointly. Unlike earlier text-to-video tools that accepted a single prompt and returned a single continuous clip, Seedance 2.0 accepts text, images, audio, and video as simultaneous inputs and generates multi-shot cinematic sequences with natively synchronized audio in a single generation pass.

The model is developed by ByteDance's Seed research team, the same organization behind TikTok's algorithmic infrastructure. ByteDance reportedly hired a former Google DeepMind vice president to lead foundational AI research and poached senior engineers from Alibaba and various AI startups, pairing that talent with significant infrastructure investment to build a model capable of competing directly with OpenAI's Sora and Runway at the highest capability tier.

As of February 2026, Seedance 2.0 is available via ByteDance's Jianying platform under the name Xiaoyunque for users with a Chinese Douyin ID, and through third-party API platforms including fal.ai for international developers. A full API launch has been announced, and a broader rollout is expected soon.

Seedance 2.0 delivers output at up to 2K cinematic resolution, with native 1080p as the standard production format. The model supports six aspect ratios (16:9, 9:16, 1:1, 4:3, 3:4, and 21:9), making it directly compatible with the format requirements of TikTok, Instagram Reels, YouTube Shorts, widescreen advertising, and cinema-format productions without post-production reformatting.
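To make the format list concrete, here is a minimal sketch that converts each of the six aspect ratios into pixel dimensions. It assumes that "1080p" means the shorter side of the frame is 1080 pixels and that dimensions are rounded to even numbers. Both are assumptions for illustration, since the exact output sizes the model produces are not stated here, and `frame_size` is a hypothetical helper rather than part of any Seedance API.

```python
from fractions import Fraction

# The six aspect ratios listed above, as (width, height) pairs.
ASPECT_RATIOS = [(16, 9), (9, 16), (1, 1), (4, 3), (3, 4), (21, 9)]


def frame_size(ratio, short_side=1080, multiple=2):
    """Return (width, height) in pixels with the shorter side fixed.

    Assumption: "1080p" refers to the shorter side of the frame.
    Each dimension is rounded to the nearest `multiple`, because
    many video encoders expect even dimensions.
    """
    w, h = ratio
    scale = Fraction(short_side, min(w, h))

    def snap(value):
        return int(round(value / multiple)) * multiple

    return snap(w * scale), snap(h * scale)


if __name__ == "__main__":
    for ratio in ASPECT_RATIOS:
        width, height = frame_size(ratio)
        print(f"{ratio[0]}:{ratio[1]} -> {width}x{height}")
```

Under these assumptions, 16:9 maps to 1920x1080 and 9:16 to 1080x1920, which matches the usual landscape and vertical delivery sizes for the platforms named above.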

Generation speed is approximately 30 percent faster than the previous Seedance 1.5 model, with most clips completing within two to three minutes. Videos are generated in lengths of five to fifteen seconds per clip, with the multi-shot architecture allowing a single prompt to produce what amounts to an edited sequence with distinct scenes and natural cuts rather than a single uninterrupted take.

The most technically significant feature of Seedance 2.0 is native audio: sound is generated alongside the visual output in a single pass. The model produces dialogue, ambient soundscapes, and sound effects that are synchronized to the visual content at the frame level rather than added in post-production. Music carries cinematic bass and presence, and dialogue is rendered with phoneme-level lip sync accuracy.

Lip sync is supported in more than eight languages, including English, Mandarin, Japanese, Korean, and Spanish. Users can also upload their own audio tracks and receive visuals with lip movement generated to match the provided audio. This enables dubbed content creation and localized video production at a scale and cost that conventional production pipelines cannot approach.

Seedance 2.0's unified architecture accepts up to nine reference images, three video clips, and three audio tracks simultaneously alongside a text prompt in a single generation request. This multimodal input capability allows the model to extract motion logic, camera movement, lighting style, and sound atmosphere from reference materials directly, reducing the number of prompt iterations required to achieve a specific visual outcome.
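The input limits above are easy to exceed when assembling reference material, so a pre-flight check can save a failed request. The following is a minimal sketch that only encodes the counts quoted in this article (nine images, three video clips, three audio tracks). The `GenerationRequest` class and its field names are hypothetical and do not reflect the real request schema of ByteDance or any third-party platform.

```python
from dataclasses import dataclass, field

# Per-request limits as quoted in the text above.
MAX_IMAGES, MAX_VIDEOS, MAX_AUDIO = 9, 3, 3


@dataclass
class GenerationRequest:
    """Hypothetical container for one multimodal generation request."""

    prompt: str
    images: list = field(default_factory=list)
    videos: list = field(default_factory=list)
    audio: list = field(default_factory=list)

    def validate(self):
        """Return a list of problems; an empty list means the request fits."""
        problems = []
        if not self.prompt.strip():
            problems.append("prompt is empty")
        checks = (
            ("images", self.images, MAX_IMAGES),
            ("videos", self.videos, MAX_VIDEOS),
            ("audio", self.audio, MAX_AUDIO),
        )
        for name, items, limit in checks:
            if len(items) > limit:
                problems.append(f"{name}: {len(items)} supplied, limit is {limit}")
        return problems


if __name__ == "__main__":
    request = GenerationRequest(
        prompt="A courier sprints across a rooftop at night",
        images=[f"ref_{i}.png" for i in range(10)],  # one too many
    )
    print(request.validate())
```

Returning a list of problems rather than raising on the first one lets a creator fix every over-limit input in a single pass.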

The Dual Branch Diffusion Transformer architecture underlying Seedance 2.0 enables the model to process these heterogeneous inputs and maintain character consistency, visual style coherence, and atmospheric continuity across multi-shot sequences. For creators who need a recognizable protagonist to appear consistently across an entire short-form narrative, this represents a material practical improvement over earlier AI video generation tools.

As an AI video tool for TikTok, Reels, and Shorts, Seedance 2.0 is particularly well-suited to the hook-action-payoff narrative structure that performs well on short-form platforms. A creator can describe an opening scene, a central action, and a closing beat in a single detailed prompt, and the multi-shot generation produces a sequence that matches that three-part structure with consistent visual logic throughout.

For marketing teams, the model's support for product-to-video workflows means a still image of a product can be animated into a cinematic promotional clip with motion, lighting, and environmental context added by the AI. Small businesses that previously could not afford professional video production can now generate campaign-quality content at a fraction of the cost. Reports indicate that Seedance 2.0 costs approximately half as many credits as Google's Veo 3 on comparable third-party platforms, making the economics compelling for high-volume content production.

The model's director-level camera control, supporting dolly zooms, rack focus, tracking shots, and smooth handheld movement, makes it applicable to storyboard production, pre-visualization for live-action shoots, and concept development in advertising and film. Production teams that previously needed a physical shoot to evaluate whether a creative concept worked can now generate a photorealistic preview from a text description in under three minutes.

Positioning Seedance 2.0 against its primary competitors reveals distinct capability trade-offs. In direct comparisons against OpenAI's Sora, Seedance 2.0 demonstrates advantages in multimodal reference input breadth, native audio generation, and cost per generation. Sora currently offers stronger consistency in photorealistic human subject rendering for some use cases, but its audio integration remains less comprehensive and its pricing is higher at equivalent quality tiers.

Against Runway Gen-3, Seedance 2.0 offers broader multimodal input support and native audio generation that Runway currently lacks. Runway holds advantages in its established editorial workflow integrations and its longer track record of professional deployment in commercial production environments. For creators who prioritize input flexibility and cost efficiency, Seedance 2.0 presents a compelling alternative. For those requiring deep integration with existing post-production pipelines, Runway's ecosystem maturity remains relevant.

Seedance 2.0's realism has generated significant legal and ethical scrutiny. Within days of its release, Disney and Paramount issued cease-and-desist letters to ByteDance over user-generated clips featuring copyrighted characters and likenesses. ByteDance has stated publicly that it respects intellectual property rights and committed to strengthening safeguards. Users generating content with recognizable likenesses, branded characters, or footage derived from copyrighted material face genuine legal exposure that the platform's technical capability does not eliminate.

At the technical level, the model still exhibits limitations in multi-subject consistency across longer sequences, text rendering accuracy within generated video, and complex editing effects. The 15-second maximum generation length per clip means that productions requiring longer continuous takes still need external editing to assemble sequences. AI ethics researchers have also raised concerns about deepfake potential given the model's cinematic realism, underscoring the importance of labeling and responsible deployment.
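Because of the 15-second ceiling, a longer piece has to be planned as several clips and assembled in an editor. The sketch below shows one way to do that arithmetic: it splits a target duration into the fewest clips that each fall within the five-to-fifteen-second range, keeping the clips as even in length as possible. It assumes whole-second durations, and `plan_clips` is a hypothetical planning helper, not a feature of the model.

```python
import math

# Clip length range in seconds, per the limits quoted in this article.
MIN_CLIP, MAX_CLIP = 5, 15


def plan_clips(total_seconds):
    """Split a whole-second duration into the fewest clips of 5 to 15 seconds.

    Clip lengths differ by at most one second so that no single clip
    ends up much shorter than the others.
    """
    if not isinstance(total_seconds, int):
        raise TypeError("total_seconds must be a whole number of seconds")
    if total_seconds < MIN_CLIP:
        raise ValueError(f"total must be at least {MIN_CLIP} seconds")
    count = math.ceil(total_seconds / MAX_CLIP)
    base, extra = divmod(total_seconds, count)
    # The first `extra` clips absorb the remainder, one second each.
    return [base + 1] * extra + [base] * (count - extra)


if __name__ == "__main__":
    for target in (12, 40, 60):
        print(target, plan_clips(target))
```

A 40-second spot, for example, comes out as three clips of 14, 13, and 13 seconds, each of which would be generated separately and then cut together.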

International access remains limited as of early 2026. Full access is currently gated behind a Chinese Douyin ID on the native platform, with broader international rollout in progress. Developers can access the model via third-party platforms, but the consumer-facing creator workflow is not yet available globally at the same level as competitors like Runway or Sora.

Seedance 2.0 is a genuinely significant release in the AI video generation landscape. Its unified multimodal architecture, native audio-video joint generation, phoneme-level lip sync in more than eight languages, and director-level camera control collectively represent capabilities that were unavailable in a single consumer-accessible tool before this release. The cost efficiency relative to comparable tools further strengthens its position for high-volume short-form content production.

For content creators and marketing teams, the practical recommendation is to begin evaluating Seedance 2.0 for short-form social content, product animation, and concept pre-visualization while remaining attentive to the evolving intellectual property and access landscape. The model rewards detailed, structured prompts that specify scene, subject, action, mood, camera movement, and audio character simultaneously, and treating initial outputs as drafts to refine iteratively produces the best results.
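One way to keep prompts structured is to treat the six elements named above as required fields and render them into a single prompt string. The sketch below does that. The `ShotPrompt` class and the "Label: value." output format are assumptions for illustration only. The source does not document a preferred prompt syntax, so the rendered text should be treated as a starting draft to adapt.

```python
from dataclasses import dataclass, fields


@dataclass
class ShotPrompt:
    """Hypothetical template covering the six elements named above."""

    scene: str
    subject: str
    action: str
    mood: str
    camera: str
    audio: str

    def render(self):
        """Join all fields into one prompt string, rejecting blank fields."""
        missing = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if missing:
            raise ValueError("missing prompt fields: " + ", ".join(missing))
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip().rstrip(".")
            parts.append(f"{f.name.capitalize()}: {value}.")
        return " ".join(parts)


if __name__ == "__main__":
    draft = ShotPrompt(
        scene="rain-slicked rooftop at night",
        subject="a courier in a yellow jacket",
        action="sprints and leaps a gap between buildings",
        mood="tense",
        camera="low tracking shot",
        audio="distant sirens and heavy rain",
    )
    print(draft.render())
```

Making every field mandatory forces each draft to state camera movement and audio character explicitly, which are the details most easily left out of a free-form prompt.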

The wider industry implications are still unfolding. Analysts have compared Seedance 2.0's cultural impact to the moment DeepSeek demonstrated that world-class AI capability could emerge from outside the traditional Silicon Valley ecosystem. Whether or not that comparison ultimately proves accurate, one thing is clear: the barrier to cinematic video production has dropped substantially, and the creators who understand how to direct AI tools at this level will hold a meaningful advantage in the content economy ahead.